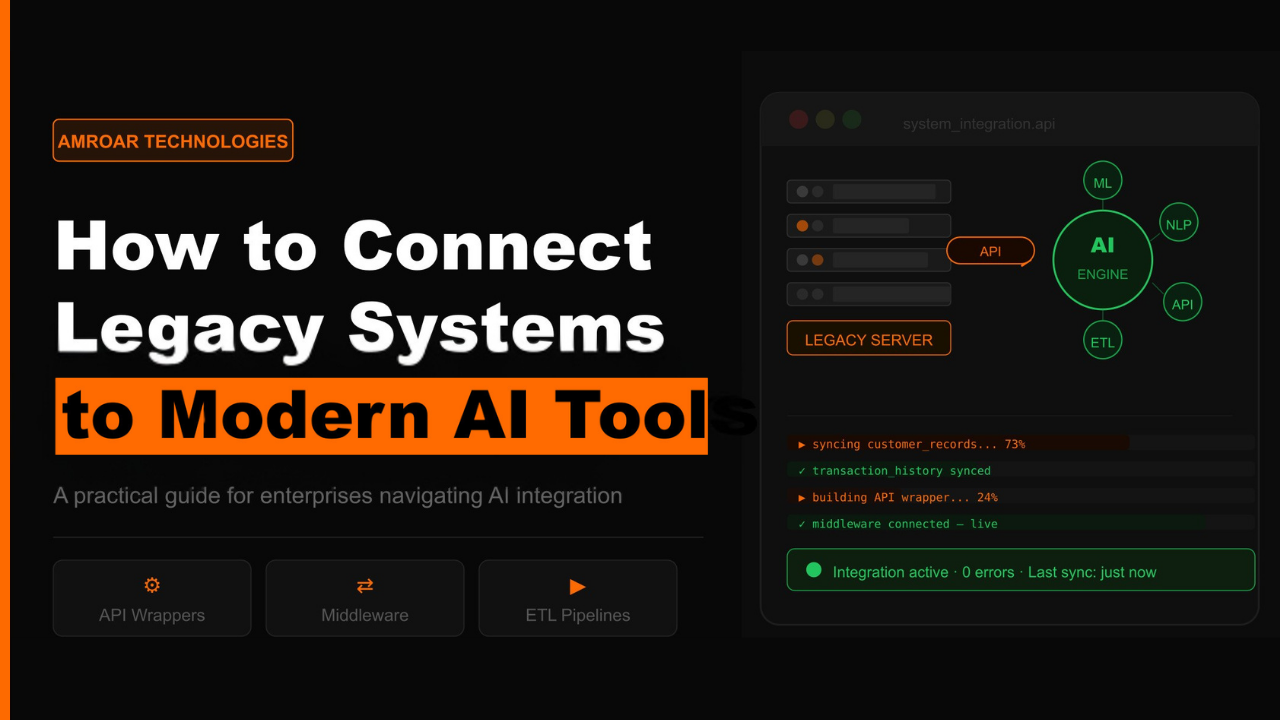

How to Connect Legacy Systems to Modern AI Tools

Legacy system modernization is one of those projects that almost every growing business knows it needs to tackle — and almost every growing business keeps pushing to next quarter. The old systems work. They hold years of critical data. And the idea of touching them feels like opening a box that might not close again. But here’s what that hesitation is actually costing. Every month those legacy systems stay disconnected from your modern AI tools is a month your business is making decisions on incomplete information, running processes that could be automated, and leaving efficiency gains on the table that your competitors are already capturing.

The good news is that connecting legacy systems to modern AI doesn’t have to mean replacing everything. In most cases it doesn’t even come close. This post walks through why the integration challenge exists, the approaches that actually work, and how to move forward without the chaos most businesses are afraid of.

Why Is Legacy System Modernization Such a Common Struggle?

Legacy systems weren’t built to talk to anything outside their own world. They were designed in a different era — when batch exports, proprietary protocols, and siloed databases were the norm and nobody was thinking about connecting them to AI tools that didn’t exist yet. Modern AI platforms, on the other hand, expect real-time data streams, clean structured formats, and API connections. That gap between what legacy systems speak and what modern tools expect is where most integration projects run into trouble.

And it’s worth being honest about why businesses hold onto these systems in the first place, because the reasons are completely valid. Replacing a legacy system isn’t a project; it’s a program. It can take years, tends to cost more than anyone budgets for, and carries a failure rate that keeps CIOs up at night. Many legacy systems also do specific things extremely well that modern software doesn’t replicate easily. And critically, the data inside them is often irreplaceable: years of transaction history, customer records, and operational data that modern AI tools need access to in order to be genuinely useful.

The real cost of doing nothing:

- AI tools making decisions on partial data because the full picture is locked in a disconnected system.

- Manual processes bridging gaps that technology could handle automatically.

- Teams working from different versions of the truth because data lives in too many separate places.

- Competitors who have connected their systems pulling ahead on speed, accuracy, and customer experience.

What Actually Makes the Legacy Integration Challenge Hard?

The technical barriers are real — but they’re not mysterious. Understanding them is the first step toward choosing the right approach for your situation.

1. Legacy Systems Don’t Have a Modern Entry Point

Modern enterprise system integration is built around APIs — standardized doors that systems use to exchange data. Most legacy systems don’t have one, or have something that was technically called an API when it was built but behaves nothing like what a modern AI tool expects. This is where the majority of legacy integration projects hit their first wall. The obvious entry point everyone assumed would be there simply isn’t.

- Legacy databases often expose data through flat files, batch exports, or proprietary protocols that modern tools can’t read natively.

- Building an API wrapper around the legacy system — creating a modern interface without touching the core — is often the cleanest solution.

2. The Data Is Inconsistent and Nobody Warned You

AI tools need clean, consistent, well-structured data to function properly. Legacy systems almost never have it. Fields mean different things in different contexts. Records were entered inconsistently by different staff over many years. Duplicates were never cleaned up because the old system didn’t flag them. When an AI tool tries to learn from or act on this data, the gap between what exists and what’s needed becomes painfully obvious.

- Data transformation — cleaning, normalizing, and mapping legacy data to formats modern tools understand — is often the most time-consuming part of the project.

- Investing in data quality before integration begins pays off significantly — AI making decisions on clean data is a completely different experience from AI working off inconsistent records.
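The cleaning, normalizing, and deduplicating steps described above can be sketched in a few lines of Python. This is a minimal illustration, not a production routine: the field names (`CUST_NM`, `PHONE`, `SIGNUP_DT`) and the list of date formats are assumptions invented for the example, and real legacy data will need rules specific to the system.

```python
from datetime import datetime

# Hypothetical date formats found in the legacy records (assumption for illustration).
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")

def parse_date(raw):
    """Return an ISO date string, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None

def normalize(record):
    """Map one legacy row (hypothetical field names) to a clean, consistent shape."""
    return {
        # Collapse stray whitespace and apply naive title-casing.
        "name": " ".join(record.get("CUST_NM", "").split()).title(),
        # Keep digits only so differently formatted phone numbers compare equal.
        "phone": "".join(ch for ch in record.get("PHONE", "") if ch.isdigit()),
        "signup_date": parse_date(record.get("SIGNUP_DT", "")),
    }

def deduplicate(records):
    """Keep the first record seen for each (name, phone) pair."""
    seen, unique = set(), []
    for rec in records:
        key = (rec["name"], rec["phone"])
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique

legacy_rows = [
    {"CUST_NM": "  jane   DOE ", "PHONE": "(555) 010-2000", "SIGNUP_DT": "03/14/2009"},
    {"CUST_NM": "Jane Doe", "PHONE": "555-010-2000", "SIGNUP_DT": "2009-03-14"},
]
clean_rows = deduplicate([normalize(r) for r in legacy_rows])
```

Here the two rows describe the same customer in different formats, and after normalization they collapse into a single record. Matching on name and phone is a deliberately simple choice; a real project would likely need fuzzier matching and a human review step for ambiguous cases.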

3. Opening Old Systems Creates New Security Exposure

Legacy systems were built to be closed. The moment you create an integration pathway into one, you’re also creating a potential entry point into infrastructure that was never designed to be externally accessible. For businesses handling sensitive customer, financial, or operational data, this deserves serious thought — particularly when the AI tool you’re connecting to lives in the cloud.

- Every integration point is a surface that needs to be secured with the same seriousness as the systems it connects.

- Data flowing between legacy infrastructure and modern AI tools needs encryption in transit — something legacy systems rarely handle natively.

- Security needs to be designed into the integration architecture from day one — not added as an afterthought when someone raises the concern.
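As one small, concrete piece of this, the integration code that calls out to a cloud AI service can enforce encryption in transit itself rather than relying on the legacy side. The sketch below uses Python's standard `ssl` module; the choice of TLS 1.2 as the minimum version is an assumption for illustration, and your own security policy may require something stricter.

```python
import ssl

def make_tls_context():
    """Build a client-side TLS context that verifies the server certificate."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Refuse protocol versions older than TLS 1.2 (assumed policy for this sketch).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context

tls_context = make_tls_context()
```

The resulting context can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=tls_context)`. Encryption in transit is only one layer; authentication, network restrictions, and audit logging on the integration point still need their own design.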

The Legacy System Modernization Approaches That Actually Work

There’s no single right answer here — the best approach depends on what the legacy system does, how critical it is, and what the AI tool actually needs from it. But these four patterns show up consistently in projects that succeed.

1. Build an API Layer — The Safest First Move

Rather than touching the legacy system itself — which is always risky — you build a modern API layer that sits in front of it. The legacy system keeps doing exactly what it does. The API layer translates requests from modern AI tools into something the legacy system understands, and sends the responses back in a format modern platforms can work with. The legacy system stays untouched. The AI tool gets a clean, modern interface. And when the legacy system eventually does get replaced, the API layer just gets pointed at the new system.

- Lowest risk approach — no changes to the core legacy system.

- Future-proofs the investment — when the legacy system is replaced, the integration architecture stays.
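To make the translation idea concrete, here is a minimal sketch of an API layer in Python. Everything in it is hypothetical: the fixed-width record layout, the `LegacyBackend` stand-in, and the `CustomerApi` class are invented for illustration, and a real layer would sit behind a web framework and talk to the actual legacy interface.

```python
import json

# Hypothetical fixed-width layout of a legacy customer record: (field, start, end).
LAYOUT = [("customer_id", 0, 6), ("name", 6, 26), ("balance_cents", 26, 36)]

class LegacyBackend:
    """Stand-in for the legacy system; returns fixed-width text records."""
    def __init__(self, records):
        self._records = records

    def fetch(self, customer_id):
        return self._records.get(customer_id)

class CustomerApi:
    """Modern-facing layer: accepts an ID, returns JSON, never modifies the backend."""
    def __init__(self, backend):
        self._backend = backend

    def get_customer(self, customer_id):
        # Translate the modern request into the zero-padded key the backend expects.
        raw = self._backend.fetch(customer_id.zfill(6))
        if raw is None:
            return json.dumps({"error": "not_found"})
        # Translate the fixed-width response into named, typed fields.
        fields = {name: raw[start:end].strip() for name, start, end in LAYOUT}
        fields["balance_cents"] = int(fields["balance_cents"])
        return json.dumps(fields)

record = "000042" + "Acme Supply".ljust(20) + "0000125000"
api = CustomerApi(LegacyBackend({"000042": record}))
response = api.get_customer("42")
```

The AI tool only ever sees `get_customer` and JSON. If the legacy system is later replaced, a different backend object with the same `fetch` method can be passed in, and callers of the API layer do not need to change.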

2. Use a Middleware Platform for Multiple Systems

When a business has several legacy systems that all need to connect to modern AI tools, building individual point-to-point connections quickly becomes unmanageable. Middleware platforms like MuleSoft sit between all the systems and handle the data transformation, routing, and protocol translation, so everything connects to the middleware layer rather than directly to each other. To add a new system, connect it to the middleware. To retire an old one, disconnect it. The result is a cleaner, more scalable enterprise AI integration.

- Centralizes integration management — one layer to monitor and maintain rather than dozens of separate connections.

- Pre-built connectors for common legacy systems reduce the amount of custom development needed to get started.
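The hub-and-spoke idea can be shown with a toy example in Python. This is not how MuleSoft or any specific middleware product is configured; the `IntegrationHub` class, the system names, and the field names are all hypothetical and exist only to illustrate why one adapter per system scales better than point-to-point links.

```python
class IntegrationHub:
    """Minimal hub: each system registers one adapter instead of many direct links."""
    def __init__(self):
        self._adapters = {}

    def connect(self, system_name, to_canonical):
        """Register a function that converts that system's records to the shared format."""
        self._adapters[system_name] = to_canonical

    def disconnect(self, system_name):
        """Remove a system without touching any other connection."""
        self._adapters.pop(system_name, None)

    def translate(self, system_name, record):
        if system_name not in self._adapters:
            raise KeyError(f"no adapter registered for {system_name!r}")
        return self._adapters[system_name](record)

hub = IntegrationHub()
# Hypothetical systems and field names, for illustration only.
hub.connect("erp", lambda r: {"order_id": r["ORDNO"], "total": float(r["AMT"])})
hub.connect("crm", lambda r: {"order_id": r["order"]["id"], "total": r["order"]["total"]})

erp_order = hub.translate("erp", {"ORDNO": "A-100", "AMT": "19.50"})
crm_order = hub.translate("crm", {"order": {"id": "B-200", "total": 42.0}})
```

Both systems end up producing records in the same shape, so the AI tool consumes one format regardless of where the data came from. Adding a third system means writing one more adapter, not a new connection to every existing system.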

3. Build a Data Pipeline When Real-Time Isn’t Required

Sometimes the AI tool doesn’t need live access to the legacy system — it just needs the data that’s been accumulating there for years. Building a data pipeline that extracts, transforms, and loads legacy data into a modern store the AI can query is often the most practical approach for AI training, analytics, and knowledge base use cases. The legacy system stays exactly as it is. The AI gets a clean, structured dataset to work from.

- ETL pipelines run on a schedule (hourly or daily, for example) or on demand, depending on how current the data needs to be.

- Data transformation happens at the pipeline level — cleaning and structuring without requiring any changes to the legacy system itself.
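A minimal sketch of the extract, transform, and load steps, using only the Python standard library. The CSV export, its column names, and the `orders` table are assumptions made up for the example, and an in-memory SQLite database stands in for whatever modern store the AI tool would actually query.

```python
import csv
import io
import sqlite3

# Hypothetical batch export from the legacy system (assumed column names).
LEGACY_EXPORT = """ORDER_NO,CUST,AMOUNT
1001, acme ,19.50
1002,Globex,
1003,ACME,5.25
"""

def extract(text):
    """Read the exported flat file into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Clean values and drop rows that have no amount."""
    cleaned = []
    for row in rows:
        amount = row["AMOUNT"].strip()
        if not amount:
            continue
        cleaned.append((int(row["ORDER_NO"]), row["CUST"].strip().upper(), float(amount)))
    return cleaned

def load(conn, rows):
    """Write cleaned rows to the modern store; replace on re-run so the job is repeatable."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_no INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(conn, transform(extract(LEGACY_EXPORT)))
load(conn, transform(extract(LEGACY_EXPORT)))  # a second scheduled run adds no duplicates
total = conn.execute(
    "SELECT SUM(amount) FROM orders WHERE customer = 'ACME'"
).fetchone()[0]
```

Keying the table on the order number and using `INSERT OR REPLACE` makes the load step safe to repeat, which matters when the pipeline runs on a schedule and a run occasionally fails partway through. The legacy system is only ever read from, through the export it already produces.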

4. Phased Legacy System Modernization — When the System Eventually Needs to Go

For businesses that know a legacy system needs replacing but can’t afford the disruption of doing it all at once, phased modernization is the path that keeps the business running while progress happens. You identify the components the AI integration needs most urgently, modernize those first, connect them to the AI tools, and keep going piece by piece. Old and new run in parallel during the transition. Each phase delivers something useful. You’re not spending a year building before anyone sees a result.

- Start with the data and functions the AI needs most, not the ones that are easiest to migrate.

- Running old and new in parallel removes the all-or-nothing risk of a full cutover — the business keeps operating throughout.
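One way to run old and new in parallel is to put a small router in front of both and move operations across one at a time, an approach that resembles what is often called the strangler fig pattern. The sketch below is a simplified illustration in Python; the `PhasedRouter` class, the handler functions, and the operation names are hypothetical.

```python
class PhasedRouter:
    """Send each operation to the new system once migrated, otherwise to the legacy one."""
    def __init__(self, legacy_handler, modern_handler):
        self._legacy = legacy_handler
        self._modern = modern_handler
        self._migrated = set()

    def mark_migrated(self, operation):
        """Call at the end of a phase, after the operation is verified on the new system."""
        self._migrated.add(operation)

    def handle(self, operation, payload):
        target = self._modern if operation in self._migrated else self._legacy
        return target(operation, payload)

# Hypothetical handlers standing in for the two systems.
def legacy_system(operation, payload):
    return f"legacy:{operation}:{payload}"

def modern_system(operation, payload):
    return f"modern:{operation}:{payload}"

router = PhasedRouter(legacy_system, modern_system)
before = router.handle("customer_lookup", "42")
router.mark_migrated("customer_lookup")  # phase 1 complete
after = router.handle("customer_lookup", "42")
untouched = router.handle("invoicing", "42")  # not migrated yet, still served by legacy
```

Callers keep using the same `handle` entry point throughout the transition. Each phase changes only which system answers a given operation, so a problem in one phase can be rolled back by removing that operation from the migrated set.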

Why These Projects Need Someone Who Has Done It Before

Legacy system integration projects have a reputation for going over budget and under-delivering, and that reputation exists for a reason. The technical complexity is real, but most projects that go wrong do so because the people doing the work didn’t fully understand what they were getting into before they started. Legacy systems are full of surprises. The only ones that don’t catch you off guard are the ones somebody on the team has already seen.

Amroar Technologies brings exactly the combination these projects need — deep experience in enterprise system integration and real expertise in modern AI implementation, with the judgment to know which approach fits which situation. Whether it’s an API layer around an aging ERP, a middleware platform connecting multiple legacy systems to a modern AI stack, or a phased legacy system modernization roadmap that keeps the business running throughout — Amroar has worked through these challenges across industries and knows where the traps are before walking into them.

If your business is sitting on years of valuable data locked in systems that aren’t connected to your AI tools yet — that gap is costing more than you probably realize. And it’s a solvable problem.

Final Thoughts

Legacy system modernization isn’t the most exciting part of an AI strategy — but it’s often the part that determines whether the AI actually delivers. The tools are only as good as the data they can reach. And for most businesses, the most valuable data is sitting inside systems that were never designed to share it with anything modern. That doesn’t make it impossible. It makes it a project worth approaching properly.

What to take away:

- Legacy system modernization doesn’t mean replacing everything — the right integration approach connects what you have to what you need.

- API layers, middleware platforms, data pipelines, and phased modernization are all proven paths — the right one depends on your situation.

- Data quality matters as much as connectivity: clean data going into AI produces useful outputs, and messy data doesn’t.

- Security needs to be designed into the integration from the start, not treated as an afterthought.

- The right partner has seen these systems before and knows where the surprises are — which is worth more than any methodology document.

Your legacy systems hold years of business intelligence. Modern AI tools are ready to use it. The only thing between them is the right integration strategy.
